AI is bigger than anything in tech over the last 30 years (at least). Add the hype, and it’s easy to believe you’re behind on technology.

From what we saw (especially in Germany), you have a data problem, not an AI problem.

Data-ready, not AI-ready.

That sounds counterintuitive in a market where AI adoption is clearly spiking. In June 2025, the ifo Institute found that 40.9% of companies in Germany were already using AI in their business processes, with another 18.9% planning to start soon.

You must be behind your competitors…

But Germany’s own Federal Government Data Strategy says that a lot of valuable data still goes unused. Some areas collect too little data or data of insufficient quality, and much of it is not properly findable, accessible, interoperable, or reusable.

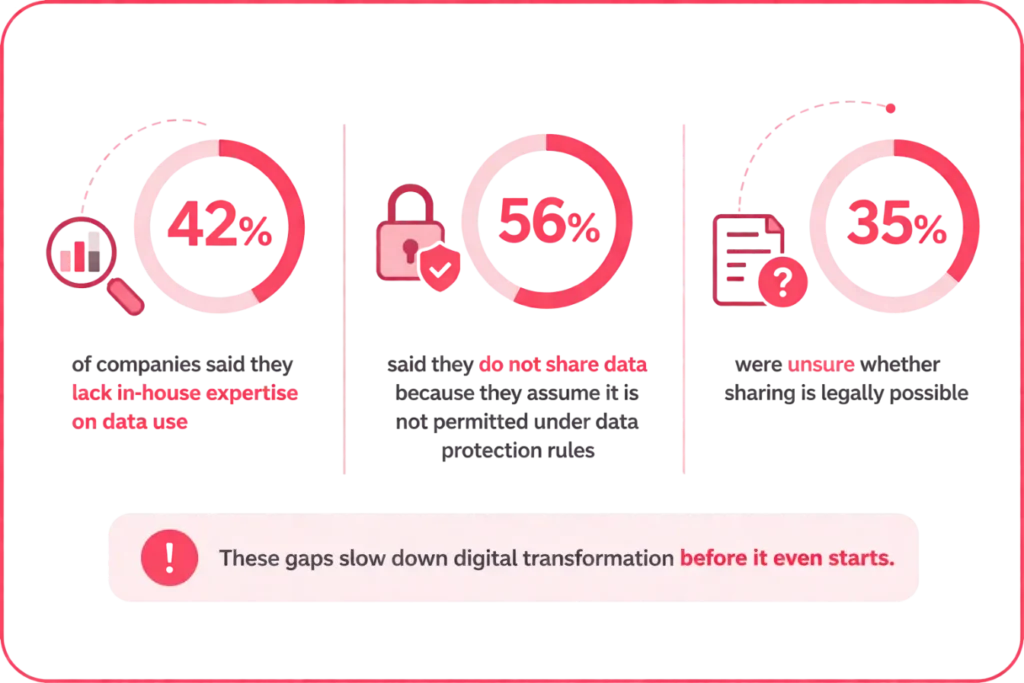

In the same document, 42% of companies said they lack in-house expertise on data use, while 56% said they do not share data because they assume it is not permitted under data protection rules. Another 35% were unsure whether sharing is legally possible.

Sure, buy the software, discuss AI…

But if your data is trapped in disconnected systems, just keep that one $20 ChatGPT subscription active.

Data readiness is what makes a digital system usable

Most digital transformation discussions still treat data as a technical layer.

In reality, it is much closer to plumbing. If the pipes are broken, the water doesn’t flow. You can install a new internal AI assistant on top of such plumbing, but if the data is bad, the output will still be slow, manual, and error-prone.

That is why data readiness should be treated as a prerequisite.

Germany’s current picture reflects that tension. On one hand, there is a clear digital ambition. On the other hand, the foundations are uneven.

In 2023, as reported by Eurostat, only 43.6% of German enterprises used integrated business software such as ERP, CRM, or BI, compared with 49.9% across the EU.

In the public sector, Germany scored 75.8 out of 100 for digital public services for citizens and 78.6 for businesses, both below EU averages and far behind Estonia’s 95.8 and 98.9.

This is not just a story about nicer state portals. It is a story about whether the data underneath those services is standardised, connected, and reusable.

We spoke to leaders who assume the only hard part is choosing the right system. Often, the harder part is making the organisation legible to that system.

5 simple checklists to assess data readiness

These checklists are designed to help leadership teams spot the data issues that make a bad foundation for digital transformation or AI implementation.

They are not a technical audit. They are a practical way for non-technical leaders to judge whether the business has the data foundations needed for serious results.

To use them correctly, score each of the six statements in a checklist from 0 to 2.

- 0 = mostly not true

- 1 = partly true / inconsistent

- 2 = clearly true across the business

How to interpret the score?

Add up the six scores. Each checklist gets its own total, from 0 to 12.

- 10–12: strong foundation

- 7–9: usable, but uneven

- 4–6: clear business risk

- 0–3: serious weakness
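
For teams that prefer to tally the results in a script rather than on paper, the scoring rule above can be sketched as follows. This is only an illustration: the function names and the sample scores are made up for the example, and the bands are taken directly from the list above.

```python
from typing import Sequence

# Each checklist in this article has six statements, each scored 0, 1, or 2.
STATEMENTS_PER_CHECKLIST = 6


def checklist_total(scores: Sequence[int]) -> int:
    """Add up the six 0-2 scores of one checklist (result is 0-12)."""
    if len(scores) != STATEMENTS_PER_CHECKLIST:
        raise ValueError("expected exactly six scores per checklist")
    if any(score not in (0, 1, 2) for score in scores):
        raise ValueError("each score must be 0, 1, or 2")
    return sum(scores)


def interpret(total: int) -> str:
    """Map a checklist total to the bands used in this article."""
    if not 0 <= total <= 12:
        raise ValueError("total must be between 0 and 12")
    if total >= 10:
        return "strong foundation"
    if total >= 7:
        return "usable, but uneven"
    if total >= 4:
        return "clear business risk"
    return "serious weakness"


# Hypothetical scores for one checklist, in statement order.
completeness_scores = [2, 1, 1, 2, 0, 1]
total = checklist_total(completeness_scores)
print(total, interpret(total))  # prints: 7 usable, but uneven
```

Scoring each checklist separately, rather than averaging all five, keeps a weak area from being hidden by a strong one.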

1. Data completeness self-evaluation checklist

| Self-check question | What “good” looks like | Score (0–2) |
| --- | --- | --- |
| Have we clearly defined which data fields are essential for each critical process? | The business knows exactly which fields must be filled for key workflows such as sales, delivery, finance, service, or compliance. | |
| Are those required fields usually filled before work moves to the next step? | Teams rarely need to stop and chase missing information before approving, shipping, invoicing, or reporting. | |
| Are missing data points the exception rather than a normal part of work? | People are not constantly relying on emails, calls, or side spreadsheets to fill gaps later. | |
| Can leadership trust that reports and dashboards are based on sufficiently complete data? | Leaders do not have to question whether major parts of the picture are missing before making decisions. | |
| Are completeness issues caught early, at the point of entry or early in the workflow? | Missing information is flagged before it becomes a delivery, billing, reporting, or customer issue. | |
| Is it clear who is responsible for making sure critical data is captured and maintained? | Ownership is visible, and incomplete records do not float around without accountability. | |
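
If someone on the team wants to put a number behind the first few questions, a completeness check can be very small. The sketch below is an assumption-heavy illustration, not a standard: the required field names and the sample order records are hypothetical and would need to match your own process.

```python
# Hypothetical list of fields an order needs before it can move to invoicing.
REQUIRED_FIELDS = ("customer_id", "delivery_address", "invoice_email")


def missing_fields(record: dict, required=REQUIRED_FIELDS) -> list:
    """Return the required fields that are absent or blank in one record."""
    missing = []
    for field in required:
        value = record.get(field)
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(field)
    return missing


def completeness_rate(records: list, required=REQUIRED_FIELDS) -> float:
    """Share of records (0.0-1.0) with every required field filled."""
    if not records:
        return 0.0
    complete = sum(1 for record in records if not missing_fields(record, required))
    return complete / len(records)


# Made-up sample data for illustration only.
orders = [
    {"customer_id": "C-1001", "delivery_address": "Musterstr. 1, Berlin",
     "invoice_email": "ap@example.com"},
    {"customer_id": "C-1002", "delivery_address": "",
     "invoice_email": "billing@example.com"},
    {"customer_id": "C-1003", "delivery_address": "Beispielweg 5, Hamburg"},
]

for order in orders:
    gaps = missing_fields(order)
    if gaps:
        print(order["customer_id"], "is missing:", ", ".join(gaps))

print(f"Complete records: {completeness_rate(orders):.0%}")
```

Running a check like this at the point of entry, rather than at month-end, is what turns "caught early" from an intention into a routine.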

2. Data scatteredness self-evaluation checklist

| Self-check question | What “good” looks like | Score (0–2) |
| --- | --- | --- |
| Do we know where our most important business data actually lives? | Leadership can clearly name the main systems, files, or teams holding core data such as customer, operational, financial, or service data. | |
| Is critical data stored in a manageable number of trusted systems rather than spread across many tools? | The business is not relying on a patchwork of emails, local files, spreadsheets, and disconnected platforms to run core processes. | |
| Can teams access the data they need without repeatedly asking other departments for it? | Work does not slow down because people have to chase information across functions or wait for someone else to export it. | |
| Is there one clear source of truth for each critical dataset? | Teams know which system or dataset should be trusted when numbers, records, or statuses do not match. | |
| Do systems share data well enough to avoid constant re-entry or manual copying? | People are not spending large amounts of time retyping, reconciling, or moving the same data between tools. | |
| When data is duplicated across systems, is it intentionally managed rather than left to drift? | Copies are controlled, synced, and understood, not left to become different versions of the same reality. | |
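
The "source of truth" and "drift" questions can also be tested on a small export. The following sketch assumes two hypothetical systems, a CRM treated as the trusted source and a billing tool holding a copy, and simply lists where the copy disagrees. System names, field names, and records are invented for the example.

```python
def find_drift(trusted: dict, copy: dict, fields: tuple) -> dict:
    """Compare a copy against the trusted system, record by record.

    Returns a mapping of record id to the list of fields that differ.
    Records that exist only in the trusted system are flagged as missing.
    """
    drift = {}
    for record_id, trusted_row in trusted.items():
        copy_row = copy.get(record_id)
        if copy_row is None:
            drift[record_id] = ["<record missing in copy>"]
            continue
        changed = [f for f in fields if trusted_row.get(f) != copy_row.get(f)]
        if changed:
            drift[record_id] = changed
    return drift


# Made-up exports keyed by customer id.
crm = {
    "C-1001": {"email": "ap@example.com", "city": "Berlin"},
    "C-1002": {"email": "billing@example.com", "city": "Hamburg"},
    "C-1003": {"email": "office@example.com", "city": "Köln"},
}
billing = {
    "C-1001": {"email": "ap@example.com", "city": "Berlin"},
    "C-1002": {"email": "billing@example.com", "city": "Munich"},
}

for record_id, problems in find_drift(crm, billing, ("email", "city")).items():
    print(record_id, "->", ", ".join(problems))
```

A check like this only works once the business has agreed which system is the trusted one, which is exactly what the fourth question in the table asks.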

3. Data timeliness self-evaluation checklist

| Self-check question | What “good” looks like | Score (0–2) |
| --- | --- | --- |
| Is critical data usually entered at the point when the work happens, not much later? | Teams record key information during or immediately after the activity, not days later from memory. | |
| Does the business get important data in time to act on it? | Operational, financial, customer, or service data arrives early enough to support decisions, not after the moment has passed. | |
| Are delays in data entry the exception rather than a normal part of work? | People do not regularly postpone updates until the end of the day, week, or month. | |
| Do workflows and decisions depend on current data rather than outdated snapshots? | Teams are working from up-to-date information, not old exports, stale reports, or manually updated files. | |
| Are the biggest bottlenecks in timely data entry clearly visible? | The business knows where delays usually happen, whether by team, system, or process step. | |
| Is someone accountable for making sure time-sensitive data is entered on time? | Timeliness is owned, tracked, and treated as part of operational discipline rather than a nice-to-have. | |
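
Where systems store both the time something happened and the time it was recorded, the entry delay can be measured directly. The sketch below assumes both timestamps exist, which is not true of every system, and uses a hypothetical 24-hour threshold and invented service-visit records.

```python
from collections import Counter
from datetime import datetime, timedelta

# Hypothetical threshold: entries recorded more than 24 hours after the event count as late.
MAX_ENTRY_LAG = timedelta(hours=24)


def late_entries(entries: list, max_lag: timedelta = MAX_ENTRY_LAG) -> list:
    """Return entries whose recording time trails the event by more than max_lag."""
    return [e for e in entries if e["recorded_at"] - e["happened_at"] > max_lag]


# Made-up records for illustration only.
visits = [
    {"id": "V-1", "team": "service",
     "happened_at": datetime(2025, 6, 2, 10, 0), "recorded_at": datetime(2025, 6, 2, 10, 30)},
    {"id": "V-2", "team": "service",
     "happened_at": datetime(2025, 6, 2, 14, 0), "recorded_at": datetime(2025, 6, 6, 9, 0)},
    {"id": "V-3", "team": "sales",
     "happened_at": datetime(2025, 6, 3, 9, 0), "recorded_at": datetime(2025, 6, 4, 8, 0)},
]

late = late_entries(visits)
print("Late entries:", [e["id"] for e in late])

# Counting late entries per team shows where the bottleneck sits.
late_by_team = Counter(e["team"] for e in late)
print("Late entries by team:", dict(late_by_team))
```

Grouping the late entries by team, system, or process step answers the "where do delays usually happen" question with data instead of impressions.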

4. Data consistency self-evaluation checklist

| Self-check question | What “good” looks like | Score (0–2) |
| --- | --- | --- |
| Are key data fields defined in the same way across teams and systems? | The business uses the same meaning for core terms such as customer, order, lead, case, product, or status. | |
| Is the same type of information entered in a standard format? | Dates, names, categories, addresses, statuses, and IDs follow agreed rules rather than personal habits. | |
| Do teams avoid creating their own versions of the same data field or label? | Departments are not using different names, drop-down values, or classifications for the same thing. | |
| When data moves between systems, does it stay consistent rather than change meaning or structure? | Information remains aligned across tools instead of being transformed, shortened, duplicated, or misclassified. | |
| Can leadership trust that the same metric means the same thing in different reports? | Reports from different functions do not contradict each other because they use different definitions or input rules. | |
| Is there clear ownership for setting and maintaining data standards? | Someone is responsible for keeping key definitions, formats, and rules aligned across the business. | |
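
"Agreed rules rather than personal habits" becomes testable once the rules are written down. As a rough sketch, assume the business has agreed on one date format (YYYY-MM-DD) and a fixed set of status labels. Both rules, the field names, and the sample records are hypothetical.

```python
import re
from datetime import datetime

# Hypothetical agreed standards.
ALLOWED_STATUSES = {"open", "in_progress", "closed"}
DATE_PATTERN = re.compile(r"\d{4}-\d{2}-\d{2}")


def is_standard_date(value: str) -> bool:
    """True if the value is a real calendar date written as YYYY-MM-DD."""
    if not DATE_PATTERN.fullmatch(value):
        return False
    try:
        datetime.strptime(value, "%Y-%m-%d")
    except ValueError:
        return False  # right shape, but not a valid date (e.g. month 13)
    return True


def inconsistencies(record: dict) -> list:
    """Return the names of fields that break the agreed standards."""
    problems = []
    if not is_standard_date(str(record.get("order_date", ""))):
        problems.append("order_date")
    if record.get("status") not in ALLOWED_STATUSES:
        problems.append("status")
    return problems


# Made-up records as they might arrive from different teams.
records = [
    {"id": "O-1", "order_date": "2025-06-02", "status": "open"},
    {"id": "O-2", "order_date": "02.06.2025", "status": "Open"},
    {"id": "O-3", "order_date": "2025-06-03", "status": "done"},
]

for record in records:
    problems = inconsistencies(record)
    if problems:
        print(record["id"], "breaks the standard for:", ", ".join(problems))
```

The code is the easy part. The harder part, and the one the last question in the table points at, is having someone who owns the list of allowed values.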

5. Data ownership self-evaluation checklist

| Self-check question | What “good” looks like | Score (0–2) |
| --- | --- | --- |
| Is there a clearly named owner for each critical dataset? | The business can point to a specific role or person responsible for key data such as customer, financial, operational, or service data. | |
| Do data owners know what they are responsible for? | Ownership is not symbolic. It includes clear responsibility for quality, access, updates, and issue resolution. | |
| Is it clear who can decide how a dataset is defined, changed, or used? | Teams do not argue informally about data rules because decision rights are already understood. | |
| Are data issues routed to the right owner quickly? | When something is missing, wrong, duplicated, or unclear, people know exactly who should address it. | |
| Does ownership continue after system implementation, not stop at go-live? | Data remains actively managed as part of business operations, not treated as a one-time project task. | |
| Are owners held accountable for keeping critical data fit for use? | Ownership is tied to real expectations, follow-through, and business outcomes, not just a name on paper. | |

Technology moves faster than data discipline

New systems cannot create order that the business itself has not yet created in its data.

That is what these checklists are meant to help with. They give leadership a simple way to test whether the foundation is actually strong enough for digital systems, automation, and AI to work as intended.

Not in theory, but in day-to-day operations where missing fields, scattered records, late updates, conflicting definitions, and unclear ownership quietly slow everything down.

The point is to become honest about where the friction really is. Because once those weaknesses are visible, they start looking like what they really are: operational risks, decision risks, and transformation risks.
